With the iOS 15 update, Apple introduced a new feature called Live Text. It lets you take whatever text or number your iPhone’s viewfinder can see and make meaningful use of it. Live Text helps with day-to-day tasks, and it is especially helpful when you are traveling somewhere and don’t know the local language. In this article, we explain everything about Live Text in iOS 15.

Live Text is a useful feature that lets you grab text or numbers from your camera’s viewfinder or from a photo you have already taken. You can copy text, search for products, or call numbers directly from the viewfinder itself. The technology is similar to Google Lens, which can be used to get the same results. Live Text, however, is built into iOS and works on iPhones and iPads running iOS 15 or iPadOS 15.

What Is Live Text and How to Use It in iOS 15 and iPadOS 15

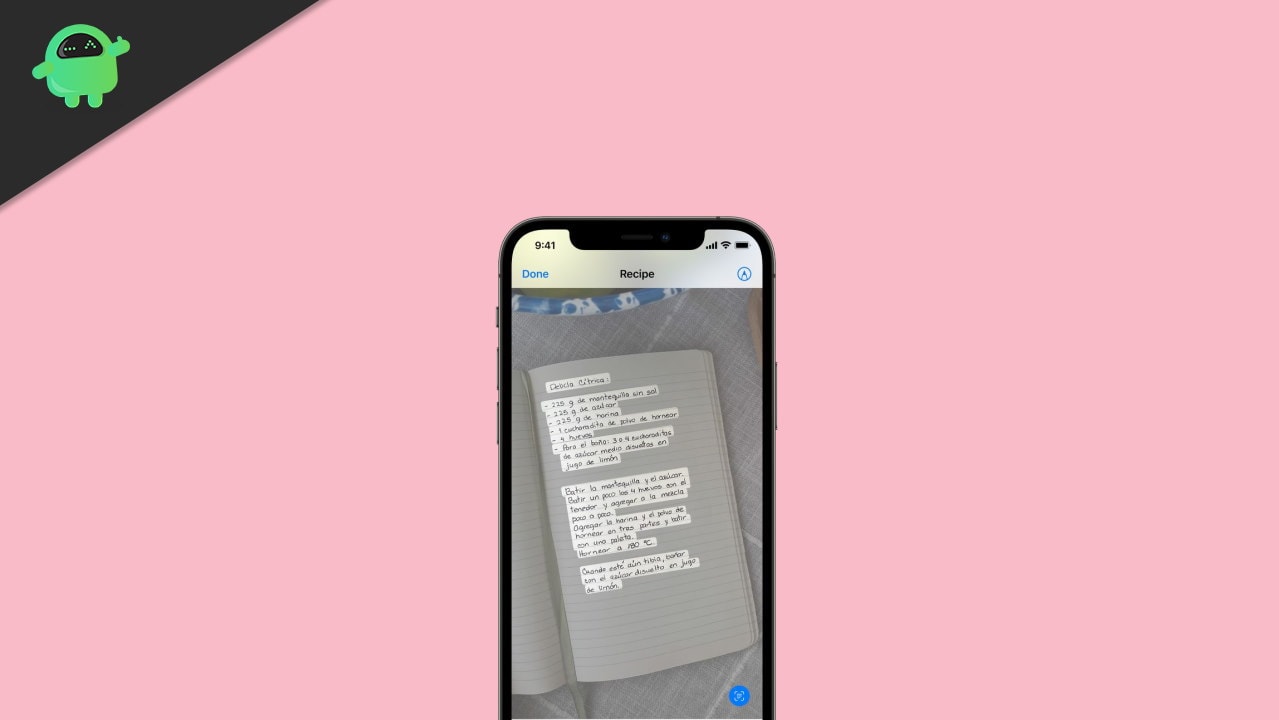

To begin with, Live Text can turn the text in an image into digital text using Optical Character Recognition (OCR). It doesn’t stop at printed text, either: it can also process handwriting, say from a notebook.

So if you have written notes at a seminar and want to submit them as a Word document, you don’t have to retype everything; OCR lets you scan the handwriting and convert it into digital text that you can use in your documents. Live Text can do much more than that. It is also context-aware, so it could be used to scan QR codes as well.

Real-world use cases

These capabilities might make you wonder what a real-world use case for this feature would look like. There are many, but I’ll explain a few that should help you understand how it is useful in practice.

For instance, you can point your camera at a bank book and copy the account number, so you don’t have to re-check it multiple times to make sure you typed the right one. Or you could simply point your camera at a visiting card and scan the email address or phone number from it.

You can then easily dial that number or send an email, since Live Text can take you to the appropriate app straight from the viewfinder.
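As a rough illustration of the idea, and not a description of how Apple implements it, the step from recognized text to an action can be sketched in a few lines of Python. The function name `detect_actions` and the two regular expressions are assumptions made up for this example, and the patterns are deliberately simplified.

```python
import re
from typing import List

# Simplified, illustrative patterns; real data detectors handle many more formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{6,}\d")


def detect_actions(recognized_text: str) -> List[str]:
    """Return mailto: and tel: links for emails and phone numbers found in the text."""
    actions = []
    for email in EMAIL_RE.findall(recognized_text):
        actions.append(f"mailto:{email}")
    for phone in PHONE_RE.findall(recognized_text):
        # Keep only digits and a leading plus sign so the number can be dialed.
        dialable = re.sub(r"[^\d+]", "", phone)
        actions.append(f"tel:{dialable}")
    return actions


# Hypothetical text as it might be read from a visiting card.
card_text = "Jane Doe\njane.doe@example.com\n+1 (555) 010-9999"
print(detect_actions(card_text))
```

Each link in the result stands for the app that would handle it: a `mailto:` link opens a mail app and a `tel:` link opens the dialer.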

How to Use the Live Text Feature on iOS 15 or iPadOS 15

iOS 15 is currently available only to developers for beta testing. The public beta will be out next month, so that is when you can get hands-on and test this feature. Once you have access to the beta version of iOS 15, you should find Live Text either as a separate application or integrated into the viewfinder. Either way, it will do the same job.

At this point, you should already know that this feature is available only on iOS 15 or iPadOS 15. But that’s only the software side. On the hardware side, you need at least Apple’s A12 Bionic chipset. This means you need an iPhone XS, iPhone XS Max, or iPhone XR or later, or an iPad mini (5th generation) or later. The reason is that Live Text relies on Apple’s Neural Engine as found in the A12 Bionic chip. So it will not run on older iPhones or iPads such as the iPhone 7, iPhone 8, iPhone X, or earlier iPad mini models.

Conclusion

As you can see, the Live Text feature on iOS 15 and iPadOS 15 will be a big deal when it is released to the public. Unlike Google Lens, which uses the Google cloud to compute everything, Live Text is going to run locally on the Neural Engine. This could be beneficial, because cloud-based processing depends on a working, fast, and reliable internet connection, while local processing avoids that requirement. However, it is too early to jump to conclusions, as results may vary once we have the actual product to test.